Is It Possible We All May Share The Same Reality Again Thanks To AI?

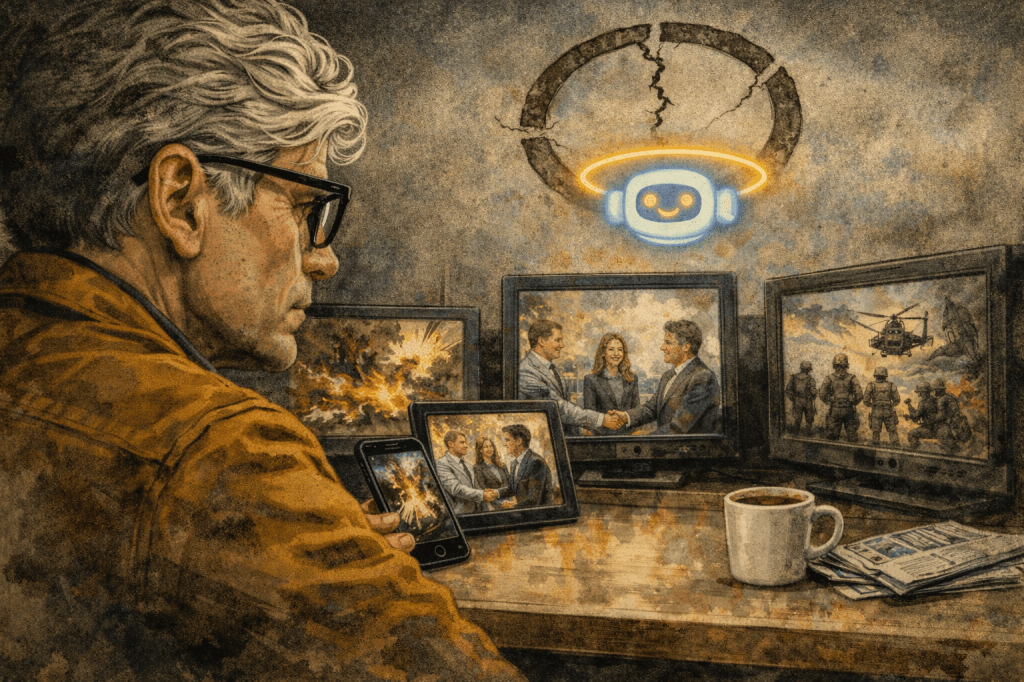

I was driving to yet another baseball tournament Saturday morning, half-awake, NPR on in the background, when a story about AI disinformation in the Iran conflict completely hijacked my brain for the next forty-five minutes.

The segment was trying to walk through the landscape of fake images circulating on social media — content pushed by the Iranian regime, by Israeli military PR, by various actors with various agendas — and which images news outlets were calling out as fabricated versus which were actually real photographs being labeled as fake by people with something to gain from the confusion. It was genuinely important journalism. It was also, I have to be honest, nearly impossible to follow. I kept losing the thread of what was supposedly fake and what was real, and by the end I wasn’t sure I was any clearer than when I started.

And I don’t think that was NPR’s fault.

That’s kind of the point.

Here’s what’s actually happening on the ground in the Iran conflict, and it’s worse than I’d realized. Researchers have documented something they’re calling the “liar’s dividend” — a term that’s as bleak as it sounds. Both sides, Iran and Israel, have been flooding social media with AI-generated war footage: fake missile strikes, fabricated protest crowds, synthetic images of cities in flames. Iranian state television broadcast AI-generated images of Israeli F-35s allegedly shot down over Iran. Pro-Israel accounts circulated fake videos of Iranians cheering “We Love Israel” — equally fabricated, equally absurd. One pro-Iran disinformation campaign alone generated over 145 million views in a matter of days.

But here’s the part that really got me: authentic, real photographs from Tehran, actual documented images of actual destruction, were being dismissed by real people as AI-generated fakes. The loop doesn’t just push fake things as real anymore. It makes real things look fake. Researchers call it the liar’s dividend because the flood of fakes pays out to anyone who wants to deny something true: just raise enough doubt, and nobody believes anything.

So when I’m sitting in my car trying to follow a radio segment about which of these images is real, and I end up more confused than when I started — that’s not a failure of journalism. That’s the system working exactly as the people flooding it with garbage intended.

This brought me to something I’ve been turning over ever since.

There’s a specific flavor of the word “fake” that has been weaponized over the last decade, and it’s worth separating it from the actual problem we’re talking about. Inaccurate reporting is a real thing. Bias is a real thing. Sloppy journalism is a real thing, and it deserves criticism. But calling accurate reporting you don’t like “fake news” — labeling an entire free press as the enemy of the people, pressuring networks, having your supporters harass reporters — that’s not a media critique. That’s the authoritarian playbook. It’s what happens in Russia. It’s what happens in China. It’s what happened when the Trump administration spent years systematically undermining trust in any outlet that reported things it didn’t want people to believe.

And now we have Red Hat money flowing into CBS through the Skydance deal, with Shari Redstone selling to people with a demonstrated interest in friendly coverage. We have network executives pre-emptively softening coverage they think might cause problems. The “fake news” attack on the press didn’t have to be true to work. It just had to plant enough doubt that when actual AI-generated fake news arrives at industrial scale, nobody has the credibility left to say “this time it’s actually real.”

That’s a hell of a setup.

The thing is, I’ve written before about how we got here economically — how the advertising model that once funded serious journalism got hollowed out, how the old network era, for all its limitations, at least operated under the assumption that there was one shared set of facts that Walter Cronkite was going to read to everyone at 6:30. Three networks, same stories, same footage, more or less the same reality. You might argue about what it meant. You couldn’t argue about whether the moon landing happened.

That world collapsed for a lot of reasons — cable fragmentation, the internet, the collapse of local newspapers, the rise of the engagement economy where outrage is the product. And into that vacuum walked social media, influencers, partisan content farms, and now AI-generated street interviews of people who don’t exist saying things that mean nothing, which rack up 14 million views before anyone figures out they’re fake. (This actually happened last year, by the way — a viral clip made with Google’s Veo 3 showed two young women outside a club spouting word-salad Gen Z nonsense about AI. Completely fabricated. Millions of views. People argued about it in the comments. Someone probably put it in a slide deck.)

And AI-generated fake Tom Holland and Zendaya wedding photos got 10 million likes — even though the creator labeled them as AI in the caption. Even Zendaya’s own friends thought they’d been left off the guest list for a wedding that never happened. She had to go on Kimmel and tell people “Babe, they’re AI.”

We are well past the point of “you should be more skeptical online.” The technology has lapped that advice.

So here’s the thing I kept coming back to on that drive, the thought that pulled me out of the doomscrolling-but-with-my-ears spiral.

Maybe this breaks the other way.

There’s actually research on this now — a field experiment published by the Centre for Economic Policy Research that found something counterintuitive: when people become more aware of AI-generated misinformation, they place more value on outlets with established credibility, not less. The hunger for something you can actually trust goes up when everything around it looks suspect.

Which brings me to the full circle part.

If you genuinely want to know what is real — and I want to be clear that I think this is not everyone, and that’s the scary part — but if you actually want to know what is real, where do you end up? You end up back at the places that have institutional processes for verification. Editors. Photographers with verified credentials submitting original files. Reporters who will have their names attached to what they write. The New York Times. NPR. PBS NewsHour. The BBC. The wire services — Reuters and AP — that still operate on the old model of: you get the story right, and you don’t get the story wrong, because your entire business is being the place other outlets trust.

This is not a nostalgic argument for the three-network era. Those outlets had their own blind spots and biases, some of them significant. But they operated under a set of professional norms that at minimum required the content to be real. You couldn’t put an AI-generated image on the CBS Evening News in 1978 because the technology didn’t exist, but also because someone’s career would have ended.

That accountability structure still exists at a handful of places. And I think — I hope — that as the information environment gets noisier and more polluted and harder to navigate, the people who actually want signal are going to find their way back to the sources that have earned the right to be trusted through decades of doing it right more often than not.

Maybe that’s optimistic. The research suggests it’s possible, but possibility isn’t probability. The echo chambers are loud and they are sticky. A lot of people don’t want signal — they want confirmation, and AI can provide infinite confirmation at zero cost.

But here’s where I land: the influencer economy, the social media free-for-all, the content farm hellscape — none of it built institutional trust. You can’t crowdsource credibility. The places that have it earned it the slow, boring, expensive way, by having standards and enforcing them and issuing corrections when they got it wrong.

If AI disinformation keeps scaling the way it’s scaling, and the lines between real and fabricated keep blurring the way they’re blurring, the only places left standing with a legitimate claim to “we checked this” are going to look a lot like the places that were already there. NPR. PBS. The Times. The wire services. Maybe a few others.

Full circle. Not because they’re perfect. Because they’re the ones who still show their work.

I guess the danger is that by the time people figure that out, enough damage will have been done that it won’t matter. But I’m choosing to believe most people, when they’re really honest with themselves, actually want to know what’s real. Maybe that’s naive. It’s certainly possible that it is. But it’s the only version of this story with a decent ending.
